Nvidia GTC: Everything We Learned About AI, Claws, CPUs and Robotics This Week

It's the last day of Nvidia GTC. This is your ultimate guide to everything the chipmaker has talked about here in San Jose.

Thursday is the final day of Nvidia's 2026 GTC, and it's been a long week. GTC is the chipmaker's biggest conference of the year, and 2026 was no exception. We got a lot of news about agentic AI, physical AI and robotics, so let's dive in.

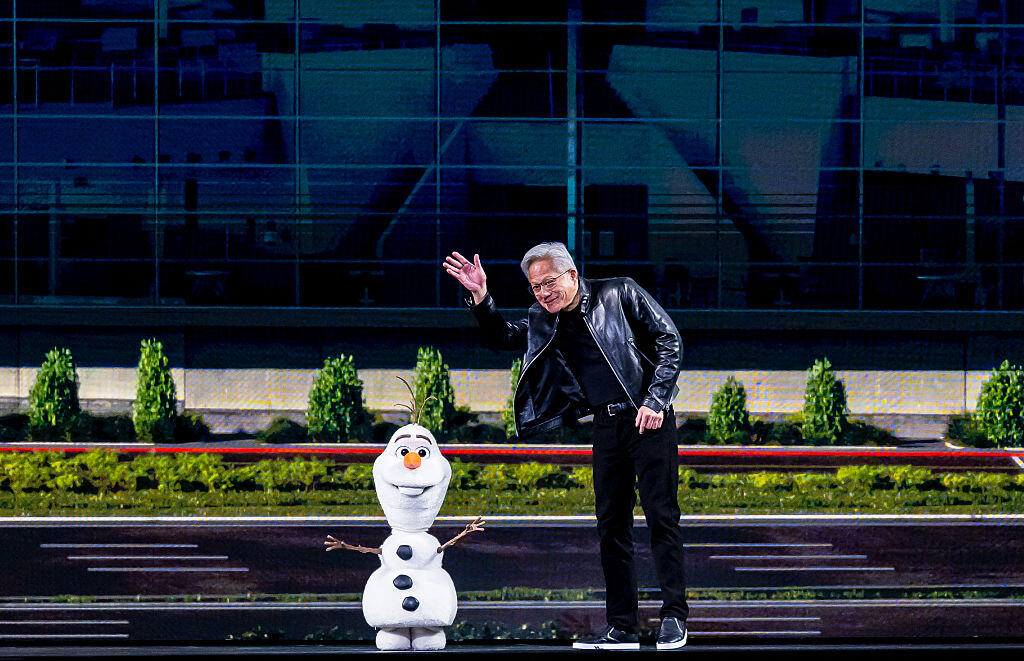

CEO Jensen Huang delivered a nearly three-hour-long keynote on Monday, setting the stage for the chipmaker's vision for 2026. There were some new releases, like the Vera CPU and DLSS 5. Since Nvidia is the biggest company in the world (by market cap), it made sense that we heard about some new partnerships, including with T-Mobile, Adobe and Disney, whose Olaf droid, based on its friendly Frozen snowman, really wowed.

Huang said he expects to see $1 trillion in orders for Nvidia's Blackwell and Vera Rubin systems through 2027, raising previous estimates. But it isn't as audacious coming from a company that's valued at an eye-watering $5 trillion. Nvidia's chips are among the most in-demand resources for companies to build and maintain their AI models. Along with the massive level of spending in the tech industry, Nvidia's skyrocketing valuation has many financial and tech experts worried about an AI "bubble."

This year will likely be a turning point for AI stalwarts such as Nvidia. Tech companies are pouring cash into data center construction to handle demand for AI services and create enough energy to power their AI ambitions. Environmental and labor concerns abound, along with very real worries that AI disruptions in the workplace will leave many people without jobs.

Nvidia has been the leader in AI chip production and, therefore, the backbone of companies like Anthropic, Google and OpenAI. Everything the company says and does gives us insight into where this complex, still-evolving industry may be headed next.

Nvidia GTC wrapped

Today's the last day of Nvidia GTC. Let's recap all the biggest news.

- Nvidia and Disney are partnering to bring AI and robotics to life, like the Olaf droid.

- Nvidia dropped its AI agent platform, called NemoClaw.

- DLSS 5 is designed to upscale video game graphics, but some gamers aren't impressed.

- The Vera CPU is here.

- A teaser of what data centers in outer space could be -- and what's standing in the way of them.

It's been a long week looking at the future of accelerated computing, AI and robotics. We've got all the nitty-gritty details below. Thanks for following along!

Key takeaways from San Jose

CNET Senior Producer Jesse Orrall was on the ground in San Jose for GTC this week. Two of the biggest themes of the week were agentic AI and robotics -- two of his areas of expertise. But Nvidia's vision of the future fell flat at times.

"I enjoyed learning about quantum computers and meeting some new robots. But nothing I saw did anything to calm my anxiety about how AI will impact people's lives, for better and for worse," Jesse said.

The labor impacts of AI were top of mind. CEO Jensen Huang and his fellow tech company leaders have been adamant that AI won't lead to major job losses. Huang said his idealized version of Nvidia 10 years from now would have a team of human workers overseeing millions of AI agents. But studies, reports and the stock market have all shown that AI adoption in the workplace has been rocky at best.

"Jensen Huang also compared people's jobs changing due to AI to horses going from plowing the fields to some being worth $5 million apiece. The silliness of this comparison obscures the fact that the horse population sharply declined once cars became popular," Jesse said.

How Disney and Nvidia brought Olaf to life as a robot

CNET Senior Producer Jesse Orrall goes behind the scenes on how Disney and Nvidia brought the friendly Frozen snowman to life as a robot.

How much of an Nvidia fan are you?

CNET Social Media Manager Faith Chihil spotted this gem in the Nvidia gift shop this week. Would you spend $178 plus tax on a 100% cotton sweater with Jensen Huang on it?

Sweater Huang is, of course, wearing a leather jacket.

A full view of the sweater.

At least Nvidia didn't cheap out with a polyester blend sweater.

T-Mobile's AI bet

When we say AI is everywhere, we mean everywhere. Including the tech and infrastructure that keep our phones running.

T-Mobile is partnering with Nvidia to make sure its infrastructure, like cell towers, is ready for the AI age. It's a kind of physical AI, tangible things that bring AI to life. T-Mobile is using Nvidia's hardware to help run AI tasks on the edge, or locally on devices instead of in the cloud. Upgrades to cell towers and wireless networks can help make that happen and keep connections running smoothly.

"We tend to think of AI happening at massive data centers or, in reduced ways, on our personal devices, but a valuable middle ground is starting to become useful," said CNET's wireless carrier expert Jeff Carlson. "Literally, it's the locations between those two points -- the cell towers -- that are becoming processing centers to distribute AI's high computational load."

This matters a lot as newer AI becomes more complex and, therefore, more compute-intensive. Improvements to make hardware more efficient are one way tech companies are trying to keep up with demand, along with building more data centers. Running AI on network hardware, not solely in the cloud, also helps keep response time quick, which is important for things like self-driving cars and real-time traffic analysis.

"T-Mobile is betting that its fast 5G network, accelerated by Nvidia's low-latency hardware, will open up more ways to handle the next wave of demanding data," said Carlson.

Is OpenClaw 'the next ChatGPT?'

In an interview with CNBC's Mad Money, Huang said OpenClaw, the open-source AI agent that took over the AI industry this year, is "definitely the next ChatGPT." Cue hysteria.

Every AI executive and their mother has been chasing the elusive title of "the next ChatGPT" or saying their product is having its "ChatGPT moment." It's a phrase so commonly used it's lost most of its meaning. Still, it's based on AI companies' real desire to have their model or service break through the everyday humdrum and bring back the magic and horror of the early days, when ChatGPT felt like a true technological breakthrough. But it's one thing for a founder or marketer to claim something is the next ChatGPT; it's quite another for a powerhouse like Huang to crown a product with that particular title.

OpenClaw is one of the only AI products to have an impressive, very viral moment in recent memory. The AI agent can answer emails, schedule appointments and basically run your entire computer, if you let it. (You might not want to do so due to serious privacy and security concerns.) Part of the mystique of OpenClaw is its chaotic origin story, changing names multiple times, spurring on crypto scammers and more. But OpenClaw has moved beyond its early snafus and now, as part of OpenAI, is poised to lead the agentic AI revolution.

Huang: Stop worrying about robots taking your job

One of the biggest concerns the AI revolution has created is the fear that automation -- through generative AI, robotics and more -- will replace human workers and eliminate jobs. Perhaps unsurprisingly, Huang, as leader of a huge AI company, isn't as worried as the rest of us.

Huang started by pointing out the workforce shortages in manufacturing, an industry that's vital to AI -- you can't have millions of agents working without a lot of data centers powering them.

"Employment is very high, and yet many companies don't have enough labor," Huang said. "Robots will fill in that gap."

Huang theorizes that as robotic automation fills in these gaps, the world's economies will grow, which will lead companies to hire more. But as a lot of AI and labor experts have said, our jobs in an AI world may be different. Instead of doing tasks yourself, you may be managing or supervising a robot or AI agent, Huang said.

But as with any technological revolution, there will be job losses because of AI. Legacy tech companies know this -- it's why tech stocks for companies such as Salesforce and Workday fell dramatically last month as Anthropic's AI proved it could massively disrupt their operations.

We have to keep in mind that Huang clearly has a vested interest in ensuring AI doesn't crash the economy and eliminate all roles. If I were the CEO of the world's biggest company, I wouldn't be so worried about AI taking my job, either. In a clear, if unrelatable, moment, Huang talked about how AI has made us busier than ever.

"When was the last time you sat on a rocking chair on your porch and drank a glass of lemonade and watched the sun go down?" he asked, only somewhat hyperbolic. "We're busier than ever."

DLSS 5: Backlash and Nvidia's response

The AI-powered optimization brings forward smaller details and creates more lifelike appearances.

Nvidia's first big announcement in its Monday keynote was DLSS 5, the next generation of its video game graphics optimization software. Deep Learning Super Sampling is designed to make gaming visuals more refined. But the response to the teaser samples of Resident Evil Requiem wasn't all positive.

Some gamers are concerned that the AI-powered tool would create an AI slop aesthetic, flattening individual games' style and creating a kind of "yassified" character appearance.

"Everything about this is a betrayal of these games' artistry," said YouTuber The Sphere Hunter in a post on X Monday. "Painting over handcrafted, intentional 3D art with shiny, wrinkly, sunken-in, porous, puckered, fraudulent, filtered nonsense is deeply disrespectful. If you want this, just watch gen-AI videos all day."

Nvidia CEO Jensen Huang addressed the backlash in a press Q&A on Tuesday. He said people worried that DLSS 5 would make graphics appear worse or homogeneous were "completely wrong." Huang said that DLSS 5's technology is "conditioned by the ground truth of the game," so the generative AI should match its style.

But with creative gen AI continuing to be so controversial, especially for professional creators like game developers, this debate is likely to drag on for years.

MotorTrend names Jensen Huang 2026 person of the year

MotorTrend presents Jensen Huang with its 2026 person of the year award.

While much of GTC has been focused on AI agents and chips, one publication is highlighting Nvidia's role in autonomous vehicles. Automobile magazine MotorTrend presented Huang with its 2026 Person of the Year award on Tuesday during a Q&A session with members of the press.

"Nvidia now has the intelligence needed to scale up self-driving, software-defined vehicles with over-the-air update capability. This is the future of mobility," MotorTrend said in a statement.

The chipmaker recently released a family of AI models called Alpamayo, specifically designed to be integrated into the software that runs self-driving cars. It's focused on real-world scenarios, using reasoning to make decisions quickly. The goal is to transform auto software from data collecting to something that's capable of making data-informed decisions and actions.

As MotorTrend put it, Alpamayo "aims to help automakers develop vehicles that perceive, reason, and act like humans to solve problems, like how to navigate a broken traffic light at a busy intersection without previous experience."

Self-driving cars like those from Waymo or Zoox are becoming less of an oddity and more of a mainstream phenomenon, as CNET's Abrar Al-Heeti reports. AI and AI-based software -- and Nvidia hardware -- are two powerful forces driving their development.

Nvidia in 10 years: 75K employees, 7.5M AI agents

Jensen Huang at a press Q&A during Nvidia GTC 2026.

In a Q&A session with members of the press, Huang described his vision for Nvidia 10 years from now plainly: 75,000 employees working with 7.5 million AI agents.

Nvidia employs only 36,000 people, according to a 2025 estimate. And AI has certainly had a tumultuous effect on the job market, from layoffs to prioritizing AI-savvy candidates. But the bigger story in Huang's estimate is the breakdown -- in that vision, each human employee would ostensibly be working with 100 AI agents. That agent-to-human ratio is one of the highest we've heard, and it's a testament to Nvidia's belief that agentic AI will be able to handle swaths of work.
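
For a rough sense of the math behind that vision, the short sketch below works through the figures quoted above. The function and variable names are our own and purely illustrative; the inputs are the 75,000 employees and 7.5 million agents Huang described, plus the 36,000-person headcount from the 2025 estimate.

```python
def agents_per_employee(employees: int, agents: int) -> float:
    """Return how many AI agents each human employee would work with."""
    return agents / employees


# Figures from Huang's 10-year vision and the 2025 headcount estimate.
FUTURE_EMPLOYEES = 75_000
FUTURE_AGENTS = 7_500_000
CURRENT_EMPLOYEES = 36_000

ratio = agents_per_employee(FUTURE_EMPLOYEES, FUTURE_AGENTS)
headcount_growth = FUTURE_EMPLOYEES / CURRENT_EMPLOYEES

print(f"{ratio:.0f} AI agents per employee")
print(f"Headcount would grow about {headcount_growth:.1f}x")
```

In other words, the vision implies roughly doubling the human workforce while adding 100 agents for every person on it.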

Catching up on Nvidia news? We've got you

Nvidia kicked off its GTC conference yesterday with a keynote from CEO Jensen Huang. A ton of announcements dropped in the nearly three-hour keynote. Our experts identified the three biggest takeaways from Nvidia GTC. Here's what you need to know.

Disney and Nvidia are teaming up to combine AI and robotics, resulting in an adorable Olaf droid that joined Huang on stage. Nvidia is also diving deep with a new platform for AI agents, called NemoClaw. Yes, it's inspired by the viral open-source AI agent OpenClaw -- founder Peter Steinberger made a cameo at the preshow, too. Huang also briefly discussed the possibility of data centers in outer space and the biggest challenge to making that a reality. Nvidia also dropped DLSS 5, an AI-powered upscaling tool for gamers.

Keep scrolling to see our play-by-play updates during the keynote, and check out our coverage on Instagram and TikTok.

And that's all, folks!

Knowing it's probably impossible to top Disney robots, Huang closed the keynote shortly thereafter. The AI-generated music video is certainly something, though.

OpenClaw's lobster logo got a cymbals solo in Nvidia's music video finale.

We got a lot of information in the nearly three-hour keynote, including a robotics partnership with Disney, a new agentic toolkit for developers and a teaser about plans to build data centers in space. Notably absent from today's keynote were the rumored new N1 and N1X chips, but it's possible we will see them released later this year.

Thanks for following along, and be sure to check back here as we continue to unpack what all of today's announcements mean for the future.

Nvidia announces NemoClaw reference stack

Nvidia's NemoClaw enables users to create claws with added layers of privacy and security.

Nvidia has announced NemoClaw, a stack for the OpenClaw agent platform to create autonomous AI agents, or "claws." NemoClaw uses the Nvidia AI Agent Toolkit to optimize OpenClaw with a single command, installing OpenShell for open models and a sandbox for added privacy and security.

"The OpenClaw 'event' cannot be understated," Huang said. "This is as big of a deal as HTML. This is as big of a deal as Linux."

Nvidia and Disney are making an Olaf robot

It's by far the best part of the keynote so far -- Nvidia and Disney are teaming up to create an Olaf droid. The robot version of Disney's Frozen snowman made an appearance on stage with Huang.

Nvidia brought out an example of its Olaf droid.

CNET's Corinne Reichert has all the details on how the project came to be.

"The robotic snowman runs simulations on Nvidia GPUs and is powered by Nvidia chips. Olaf was brought to life using the Newton Physics Engine, an open-source system developed by Nvidia, Google DeepMind and Disney Research that enables high-performance robot simulations to run quickly on GPUs," Corinne reports.

Check out her full story, which includes which Disney theme parks may see Olaf droids wandering around.

Nvidia is making a computer for space

Nvidia is not content to put its chips in almost every data center on the Earth's surface. The company is making a computer for space. Huang called it Vera Rubin Space-1, and said Nvidia and its partners are already developing it. It is "very complicated to do so," he said.

The big complication? The same one data centers face on the Earth's surface: how to avoid overheating.
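
In a vacuum, heat can leave a spacecraft only as radiation, and a back-of-the-envelope sketch shows why that's such a constraint. It uses the Stefan-Boltzmann law, which says a surface radiates power in proportion to its area and the fourth power of its temperature. Everything specific here is an assumption for illustration, not an Nvidia figure: the 100-kilowatt heat load, the 300-kelvin radiator temperature, the 0.9 emissivity and the function name. It also ignores heat absorbed from sunlight and assumes the panel radiates from one side only.

```python
# Stefan-Boltzmann constant in W / (m^2 * K^4).
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_watts: float, temp_kelvin: float,
                     emissivity: float = 0.9) -> float:
    """Area needed to radiate heat_watts to deep space.

    Simplified model: one-sided flat panel, no absorbed sunlight,
    background temperature treated as zero.
    """
    return heat_watts / (emissivity * SIGMA * temp_kelvin ** 4)


# Hypothetical load: 100 kW of waste heat, radiator held at 300 K.
area = radiator_area_m2(100_000, 300)
print(f"{area:.0f} square meters of radiator")
```

Under those assumptions, the radiator would need roughly 240 square meters of surface. Running the panel hotter shrinks that quickly, since doubling the temperature cuts the required area by a factor of 16.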

"In space, there's no conduction, there's no convection, it's just radiation," Huang said. "So we have to figure out how to cool these systems out in space."

Nvidia upgrades its Vera Rubin system for agentic AI

Nvidia's Vera Rubin data center platform helps AI companies build and deploy their AI tools. Vera Rubin is getting some new updates to help it handle agentic AI tasks, which are more compute-intensive.

The company introduced a new Vera CPU, which it says "delivers results with twice the efficiency and 50% faster than traditional CPUs."

Jensen Huang with a Vera Rubin rack, which is used in data centers.

We first saw the Vera Rubin system, named for the astronomer whose work provided key evidence for dark matter, at CES in January.

An inflection point for AI inference

Nvidia CEO Jensen Huang on stage talking about inference.

Computing demand has risen dramatically in the past few years, thanks to AI, but the biggest driver now isn't training or creating those AI models, but operating them, Huang said. This is called inference -- when a trained AI model takes in new information and applies what it has already learned to do or produce something new. Agentic AI relies heavily on inference because the models have to adjust constantly to new information, and that means demand for the computing power to handle that load is growing dramatically.

"Finally, AI is able to do productive work, and therefore the inflection point of inference has arrived," Huang said. "AI now has to think. In order to think, it has to inference. AI now has to do. In order to do, it has to inference. AI now has to read. In order to read, it has to inference."

'Token king'

The Nvidia CEO joked that he's now the "token king."

"Inference is your new workload, tokens are your new commodity ... you want to make sure that the architecture is as optimized as you can in the future," Huang said. "Intelligence will be augmented by tokens."

Tokens are the building blocks of AI, individual units of data that an AI model can process. Huang said Nvidia has the lowest cost per token in the world, making him a kind of "token king."

New DLSS 5 AI-powered upscaling for gaming

The AI-powered optimization brings forward smaller details and creates more lifelike appearances.

Nvidia introduced DLSS 5, an update to its AI-powered upscaling and optimization software for games. It seems to add element-based understanding -- it links characteristics to surfaces (like skin) and maybe objects in order to render more consistent, more realistic lighting and detail throughout a game -- by analyzing the initial content. That's in contrast to the current pixel-by-pixel and frame-by-frame understanding of a scene.

It's slated to roll out starting this fall. It's not clear which GPUs it will support, but it's likely that it's optimized for the Blackwell architecture of the RTX 50-series.

Nvidia GTC keynote begins

Nvidia's GTC keynote is officially rolling. Follow along here for updates, and you can watch the stream live on YouTube.

Jensen Huang opens the Nvidia GTC keynote.

Possible new Nvidia chips for Windows computers?

We're certain to get some updates on the performance of Nvidia chips during today's keynote, but could we also get new ones? The Verge and The Wall Street Journal have reported that Nvidia is building two new chips, called N1 and N1X, specifically for Windows computers. These chips would fuel Nvidia's return to the consumer market, potentially in Dell and Lenovo computers.

Nvidia's partnership with MediaTek would likely play a big role in the development of these chips. CNET's computing expert Matthew Elliott explains that each of the new chips would be "a system-on-chip," meaning it would integrate central, graphics and neural processing units. The chips would be based on Arm architecture, which Apple and Qualcomm also use -- not the x86 architecture used by competitors AMD and Intel, whose chips are in many Windows computers.

"Unlike high-end gaming and creator laptops with dedicated Nvidia GeForce RTX GPUs, laptops with this new Nvidia-designed, MediaTek-manufactured SoC are expected to be thin and light and long running," Elliott said.

Why Nvidia's on-device AI plan matters

Whether you run your AI on-device or in the cloud probably isn't something you think about often. But it should be. There are a lot of benefits to running AI models locally, and Nvidia hardware is helping make that easier for you.

CNET Managing Editor Jon Reed got a close-up look at Nvidia's Project G Assist at CES in January. It's a chatbot-like interface that runs on your device and lets you easily adjust your computer's settings by talking out loud. It's also the tech behind AI chatbot assistants Nvidia is developing for gamers.

"Complex strategy games like Total War: Pharaoh can be particularly challenging for new players to grasp, given that they often come with extensive documentation and intricate mechanics that warrant their own encyclopedias," Reed wrote. "This AI adviser, which runs on-device rather than in the cloud, can answer the player's questions about actual in-game events using the context of all that information."

On-device AI isn't perfect -- it takes up a significant portion of your device's memory, for example. But it's part of a growing movement to give developers and AI users a more secure, inexpensive and quick way to access AI tools without having to rely on AI companies and their data centers. Nvidia's chips in hardware like laptops are one way to make locally running AI easier, along with its more powerful desktop supercomputers, like the DGX Spark, that can be used by individual users and small- to medium-sized companies for more compute-intensive tasks.

OpenClaw creator at GTC preshow

OpenClaw creator Peter Steinberger featured in the Nvidia GTC preshow.

If there's one thing that's absolutely taken over the AI world this year, it's OpenClaw. This viral, open-source AI agent wowed fans with its agentic AI abilities, able to complete tasks independently and run your entire digital life, if you want that. OpenClaw creator Peter Steinberger appeared in a video interview with Huang, airing on the screen inside the theater at the GTC preshow. His lobster headband is a reference to OpenClaw's lobster mascot/logo.

OpenAI acquired OpenClaw quickly after it went viral, with Meta picking up its agentic AI social media spin-off, Moltbook.

Live from San Jose!

CNET and PCMag are ready for Nvidia GTC's 2026 keynote.

CNET Social Media Manager Faith Chihil and Jacqueline Goldblatt from our sibling site PCMag are inside the SAP Center waiting for the keynote to begin at 11 a.m. PT.

How to stream Nvidia GTC's keynote

CEO Jensen Huang will be speaking today about the "latest breakthroughs in AI and accelerated computing." Nvidia GTC's keynote is scheduled to begin at 11 a.m. PT (2 p.m. ET, 6 p.m. UK) on Monday, March 16. You can stream the event on YouTube.